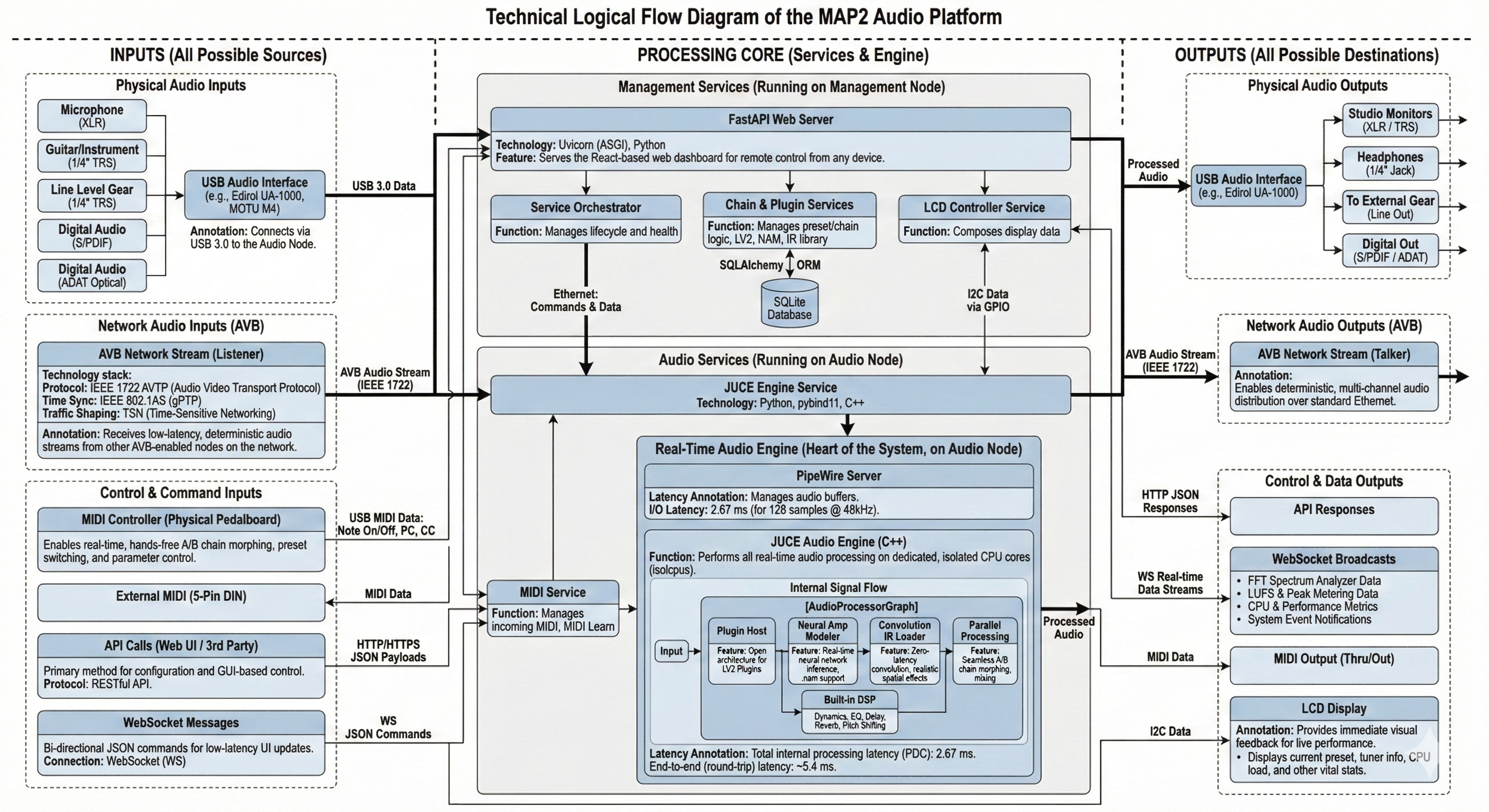

Headless stage processor

A small x86_64 host that runs fixed signal paths, preset recall, MIDI control, and browser-based remote management without a local screen.

Rack and installed-sound node

A service-managed Linux processor that boots into a known state, exposes remote control, and stays stable through long operating windows.

Open DSP development bench

A working platform for validating callback behavior, plugin hosting, routing policy, and Linux audio tuning on real hardware.

MIDI and control hub

A central node for controller mapping, automation, preset changes, and API-based coordination with external systems.

AVB-aware network audio platform

A Linux system for studying and extending timing-sensitive audio-over-Ethernet workflows using inspectable services and code.

Single-box appliance deployment

One machine running engine, control plane, and operator surfaces together when simplicity matters more than role separation.

Split-node system design

Audio execution separated from control and monitoring, for deployments that need lower jitter and clearer service boundaries.

Long-run qualification

A practical target for tracking xruns and callback drift and for validating hardware behavior and unattended runtime stability over sustained sessions.

Inspectable open appliance

A system that behaves like dedicated hardware while keeping the entire signal path, service graph, and control model visible.